GLM-5 is Here: The Open-Source Giant That Rivals GPT-4o and Gemini 3.0

The landscape of Large Language Models (LLMs) shifts fast, but every so often, a release forces everyone to stop and take notice. This week, that disruption comes from Zhipu AI (Z.ai) with the official launch of GLM-5.

Arriving just ahead of the Lunar New Year, GLM-5 isn't just another incremental update. It is a major step forward in open-source capability, built on a 744-billion-parameter Mixture-of-Experts (MoE) architecture that is turning heads across the industry. Early benchmarks suggest it trades blows with closed-source frontier models like GPT-4o and Gemini 3.0 Pro, particularly in coding and complex agentic workflows.

At Siray.AI, our mission is to bring you the best tools the AI world has to offer, the moment they are available. We are thrilled to announce that GLM-5 is already integrated into our platform. Whether you are a developer looking for superior debugging skills or a business automating complex office tasks, this model demands your attention.

The GLM-5 Breakdown: Under the Hood

What makes GLM-5 special? It’s not just raw size; it’s efficiency. While the total parameter count sits at 744B, the model uses sparse activation (MoE): a router sends each token to a small subset of experts, so only about 40B parameters are active per token. This gives the model the capacity of a very large network while keeping per-token compute much closer to that of a 40B dense model, which is why inference stays surprisingly agile.
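To make sparse activation concrete, here is a minimal Python sketch of generic top-k expert routing. It is an illustration only: the expert count (16), `k=2`, and the function names are assumptions chosen for the example, not details of GLM-5's actual router. Only the 744B and 40B figures come from the numbers quoted above.

```python
import math
import random

TOTAL_PARAMS_B = 744   # total parameters in billions, as quoted in this article
ACTIVE_PARAMS_B = 40   # approximate active parameters in billions, as quoted


def active_fraction(total_b: float = TOTAL_PARAMS_B,
                    active_b: float = ACTIVE_PARAMS_B) -> float:
    """Share of the weights that take part in one token's forward pass."""
    return active_b / total_b


def route_top_k(router_logits: list[float], k: int) -> dict[int, float]:
    """Pick the k highest-scoring experts and softmax-normalise their weights.

    Generic top-k gating for illustration; not GLM-5's real routing code.
    """
    if not 0 < k <= len(router_logits):
        raise ValueError("k must be between 1 and the number of experts")
    # Indices of the k experts with the largest router scores.
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    # Softmax over the selected experts only (shifted by the max for stability).
    peak = max(router_logits[i] for i in top)
    exp_scores = {i: math.exp(router_logits[i] - peak) for i in top}
    norm = sum(exp_scores.values())
    return {i: score / norm for i, score in exp_scores.items()}


if __name__ == "__main__":
    rng = random.Random(0)
    logits = [rng.gauss(0.0, 1.0) for _ in range(16)]  # 16 hypothetical experts
    weights = route_top_k(logits, k=2)
    print(f"experts used for this token: {sorted(weights)}")
    print(f"active share of weights: {active_fraction():.1%}")
```

With the figures quoted above, roughly 5.4% of the weights take part in any single token's forward pass; the remaining experts sit idle for that token.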

Key Features at a Glance:

- Unified Multimodal Architecture: Unlike previous generations that stitched different modules together, GLM-5 processes text, code, and visuals in a single, deeply integrated pathway.

- Agentic Powerhouse: The model is specifically optimized for "Agentic Engineering." It can handle long-running tasks involving 200 to 300 sequential tool calls without losing track of the goal, a common failure point in long agent runs.

- Massive Context: With a 200k token context window and support for up to 128k output tokens, it is built for deep work, not just quick chats.
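A quick way to reason about the context figures is to budget tokens before sending a request. The sketch below assumes that the prompt and the generated output share the same 200k window, which is common but not confirmed here for GLM-5; the function name and that sharing rule are assumptions for illustration.

```python
CONTEXT_WINDOW = 200_000  # tokens, as quoted in this article
MAX_OUTPUT = 128_000      # tokens, as quoted in this article


def max_output_budget(prompt_tokens: int,
                      context_window: int = CONTEXT_WINDOW,
                      max_output: int = MAX_OUTPUT) -> int:
    """Largest output length that still fits.

    ASSUMPTION: prompt and output tokens count against the same window.
    """
    if prompt_tokens < 0:
        raise ValueError("prompt_tokens must be non-negative")
    remaining = context_window - prompt_tokens
    return max(0, min(max_output, remaining))


if __name__ == "__main__":
    for prompt in (50_000, 150_000, 250_000):
        print(prompt, "->", max_output_budget(prompt))
```

Under that assumption, a 50k-token prompt still leaves room for the full 128k output, while a 150k-token prompt leaves only 50k tokens for the response.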

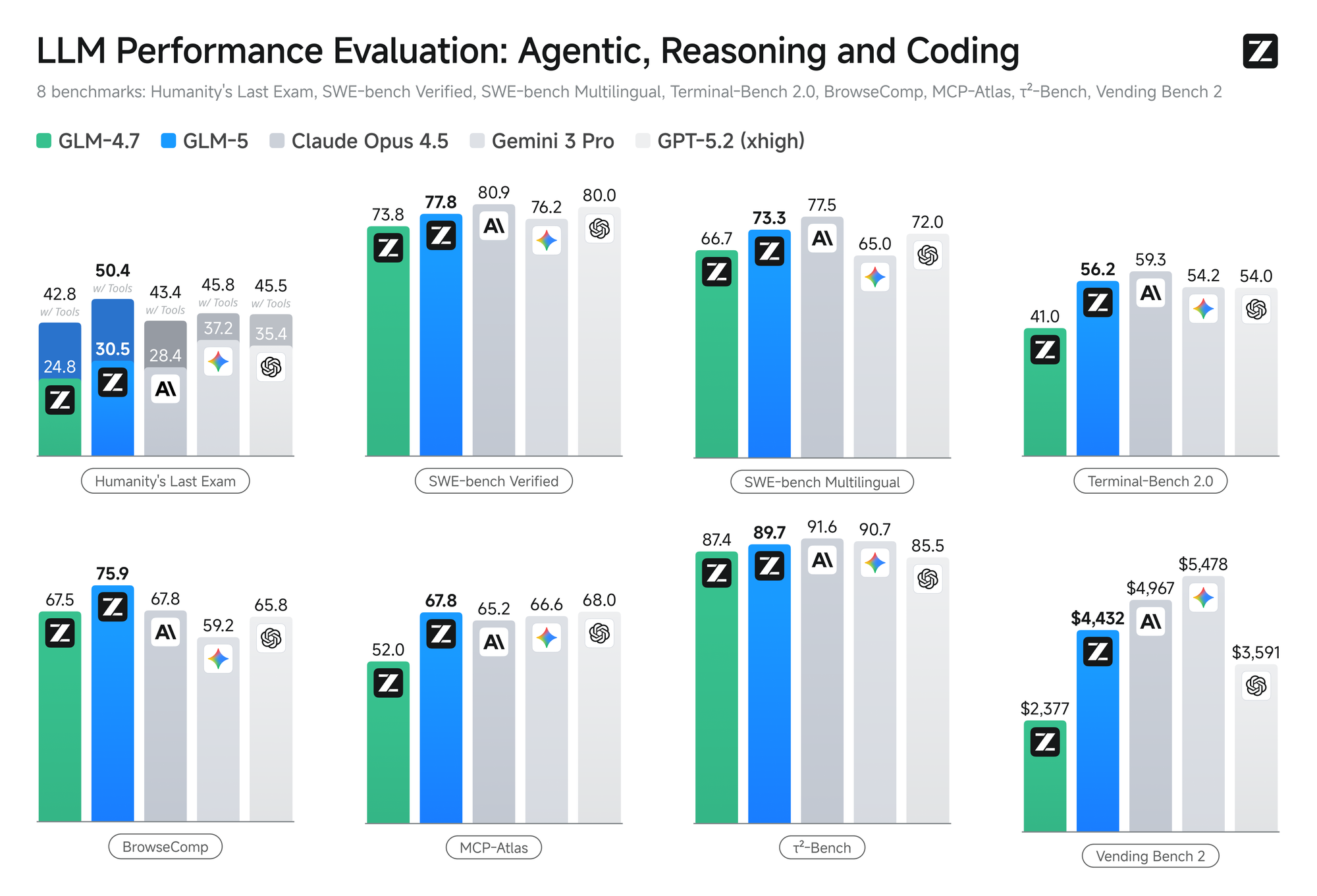

Benchmarking the Beast: GLM-5 vs. The World

We know what you’re asking: How does it actually perform? We looked at the data from trusted third-party evaluators like Artificial Analysis, and the results are telling.

1. Coding Capabilities

In the realm of software engineering, GLM-5 is a revelation. On the SWE-bench Verified benchmark, which tests a model's ability to solve real-world GitHub issues, GLM-5 scored an impressive 77.8%. To put that in perspective, this score places it neck-and-neck with Anthropic’s Claude 3.5 Sonnet (often cited as the coding king) and ahead of many proprietary competitors.

For developers using Siray.AI, this means you can rely on GLM-5 for backend refactoring, complex debugging, and even generating full-stack applications from natural language prompts with a higher success rate than ever before.

2. Reasoning and General Intelligence

On the Artificial Analysis Intelligence Index, GLM-5 scored 50, a significant milestone that positions it as a leader among open-weights models. It outperforms GPT-5.2 Codex (xhigh) on several specific coding and reasoning tasks and holds its own against Gemini 3.0 Pro in general knowledge retrieval.

3. Agentic Workflow

This is where GLM-5 truly shines. In tests like BrowseComp (web retrieval) and Vending-Bench (long-term task management), GLM-5 demonstrated an uncanny ability to plan, execute, and self-correct. Where other models might get stuck in a loop after the 10th step of a complex instruction, GLM-5 pushes through to the finish line.
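For readers who have not built an agent before, the plan-execute loop and the "stuck in a loop" failure mode can be sketched in a few lines of Python. Everything here is hypothetical scaffolding: `run_agent`, `plan_next`, the `tools` mapping, and the repeat guard are names and choices made for this example, not part of GLM-5 or the Siray.AI API. In a real setup, `plan_next` would be a call to the model.

```python
from typing import Callable, Optional

Action = tuple[str, str]  # (tool name, argument); hypothetical shape


def run_agent(plan_next: Callable[[list[str]], Optional[Action]],
              tools: dict[str, Callable[[str], str]],
              max_steps: int = 300,
              max_repeats: int = 3) -> list[str]:
    """Run tool calls until the planner returns None or a guard trips."""
    history: list[str] = []
    last_action: Optional[Action] = None
    repeats = 0
    for _ in range(max_steps):
        action = plan_next(history)
        if action is None:  # the planner signals that the task is done
            return history
        # Count how many times in a row the exact same call was requested.
        repeats = repeats + 1 if action == last_action else 1
        if repeats > max_repeats:  # identical call again and again: likely stuck
            raise RuntimeError(f"stuck repeating {action!r}")
        last_action = action
        name, arg = action
        history.append(tools[name](arg))
    raise RuntimeError(f"no result after {max_steps} steps")


if __name__ == "__main__":
    # Toy planner: call the echo tool five times, then stop.
    def planner(history: list[str]) -> Optional[Action]:
        return ("echo", str(len(history))) if len(history) < 5 else None

    print(run_agent(planner, {"echo": lambda s: s}))
```

The step budget of 300 mirrors the upper end of the tool-call range mentioned earlier; the repeat guard is one simple way to detect a model that keeps issuing the same call.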

Real-World Use Cases on Siray.AI

So, how do you actually apply this power? Since Siray.AI allows you to switch between models seamlessly, here is where we recommend toggling over to GLM-5:

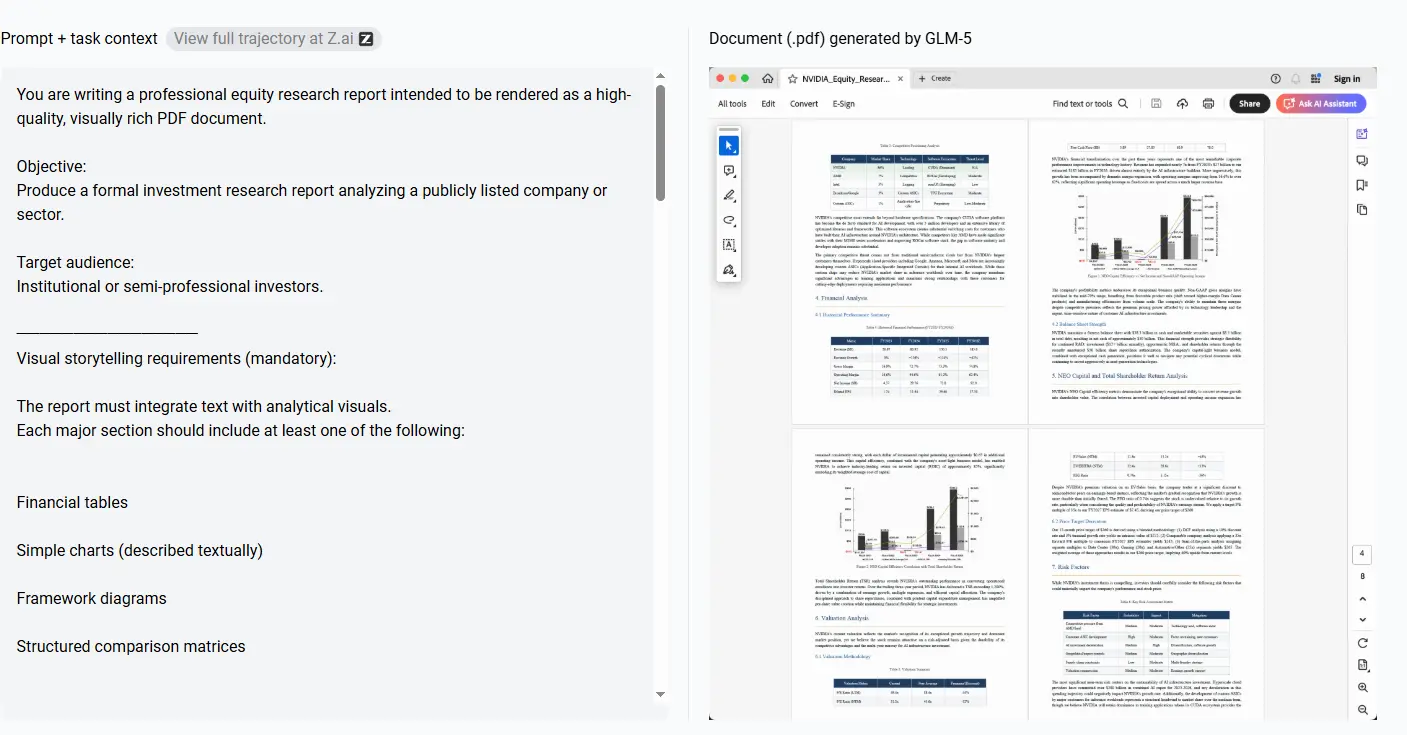

1. The "Super-Employee" Agent

Imagine you need to research a competitor, summarize their last ten blog posts, and draft a strategy document based on that data.

- Old Way: You prompt a model, it hallucinates half the links, and you have to hand-hold it through the summary.

- With GLM-5 on Siray.AI: You give the high-level instruction. GLM-5’s agentic capabilities allow it to browse, read, filter, and synthesize the information over many steps, eventually producing a Word-document-ready report.

2. Complex Code Refactoring

If you are dealing with a legacy codebase that looks like spaghetti, GLM-5 is your new best friend. Its 128k output limit means it can rewrite entire modules in one go, rather than giving you truncated code blocks. Its strong results on coding benchmarks make it more likely that the code will run on the first try, though your test suite should still have the final say.

3. Multimodal Analysis

Upload a video of a user interface interaction or a complex chart. GLM-5’s unified architecture allows it to understand the visual context better than models that treat vision as an afterthought. It can describe UI flows or extract data from messy Excel screenshots with high precision.

Why Use GLM-5 on Siray.AI?

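You might be thinking, "It's open source, can't I run it myself?" Technically, yes, if you have a cluster of H100 GPUs lying around. A 744B-parameter model is extremely hardware-intensive: even though only about 40B parameters are active per token, the full set of weights typically has to be loaded and ready.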
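A back-of-the-envelope calculation shows why. The Python sketch below estimates the memory needed for the weights alone and a lower bound on GPU count. The 80 GB per-GPU figure assumes the 80 GB H100 variant, and the precisions shown are illustrative assumptions rather than published GLM-5 deployment settings; KV cache and activations are ignored, so real requirements are higher.

```python
import math


def weight_memory_gb(params_billions: float, bytes_per_param: float) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    # (params_billions * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billions * bytes_per_param


def min_gpus(params_billions: float, bytes_per_param: float,
             gpu_memory_gb: float = 80.0) -> int:
    """Lower bound on GPU count: weights only, no KV cache or activations."""
    return math.ceil(weight_memory_gb(params_billions, bytes_per_param) / gpu_memory_gb)


if __name__ == "__main__":
    for label, nbytes in (("16-bit", 2.0), ("8-bit", 1.0)):
        gb = weight_memory_gb(744, nbytes)
        print(f"{label}: {gb:.0f} GB of weights, at least {min_gpus(744, nbytes)} GPUs")
```

At 16-bit precision that comes to 1,488 GB of weights, or at least 19 GPUs with 80 GB each; at 8-bit it is 744 GB and at least 10 GPUs, before any memory is set aside for serving requests.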

By using GLM-5 through Siray.AI, you get:

- Instant Access: No download times, no server configuration. You are up and running in seconds.

- Comparison Mode: Not sure if GLM-5 is right for a specific prompt? Use our split-screen feature to compare its output directly against GPT-4o or Claude.

- Enterprise Security: We wrap the model in our enterprise-grade security layer, ensuring your data remains private and your workflows are compliant.

- Cost Efficiency: Self-hosting massive MoE models is expensive. We optimize the inference costs, passing the savings on to you.

Summary

The release of GLM-5 by Zhipu AI marks a pivotal moment in 2026. It suggests that the gap between open-source and proprietary models has narrowed dramatically. With strong coding skills, a large context window, and agentic reasoning that competes with the industry giants, GLM-5 is a tool that serious professionals cannot afford to ignore.

Whether you are a developer, a content strategist, or a business leader, this model offers a level of nuance and capability that was pure science fiction just a few years ago.

Ready to see the difference for yourself?

Don't just take our word for the benchmarks. You can test GLM-5 alongside the world's other top models right now.