GPT 5.3 Codex: Architecture, Benchmarks, and Practical Deployment Insights

Introduction

GPT 5.3 Codex is built for one thing: working with code in environments where correctness matters.

It is not positioned as a conversational flagship model, and it does not try to compete on multimodal breadth. Instead, it focuses on structured generation, repository-aware reasoning, and predictable behavior in engineering pipelines. That distinction matters. Many general-purpose models can write code. Far fewer can do it consistently under production constraints.

For teams building IDE copilots, internal automation tools, or agent-based systems that generate and execute code, GPT 5.3 Codex represents a shift toward reliability over novelty.

This article examines how it performs, where it fits, and what developers should realistically expect when integrating it.

Model Characteristics and Design Direction

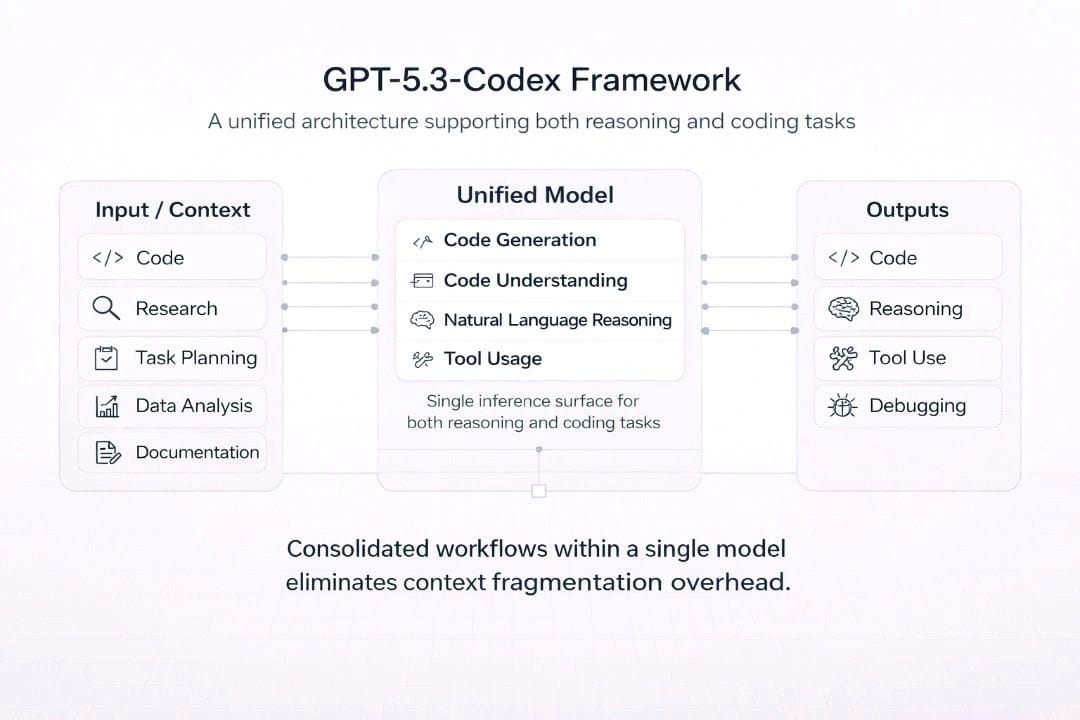

GPT 5.3 Codex retains the transformer backbone typical of large language models, but its training emphasis is noticeably different from general conversational systems.

Instead of optimizing for broad dialogue fluency, the model is tuned around:

- Multi-function reasoning

- Dependency tracking

- Syntax consistency

- Structured output fidelity

That design choice shows up quickly during testing. It is less verbose. It tends to follow explicit formatting constraints more closely. And it produces fewer partially complete code blocks compared to earlier Codex-style iterations.

The improvements are not dramatic in isolation. They become noticeable when the model is placed inside systems that depend on machine-readable outputs.

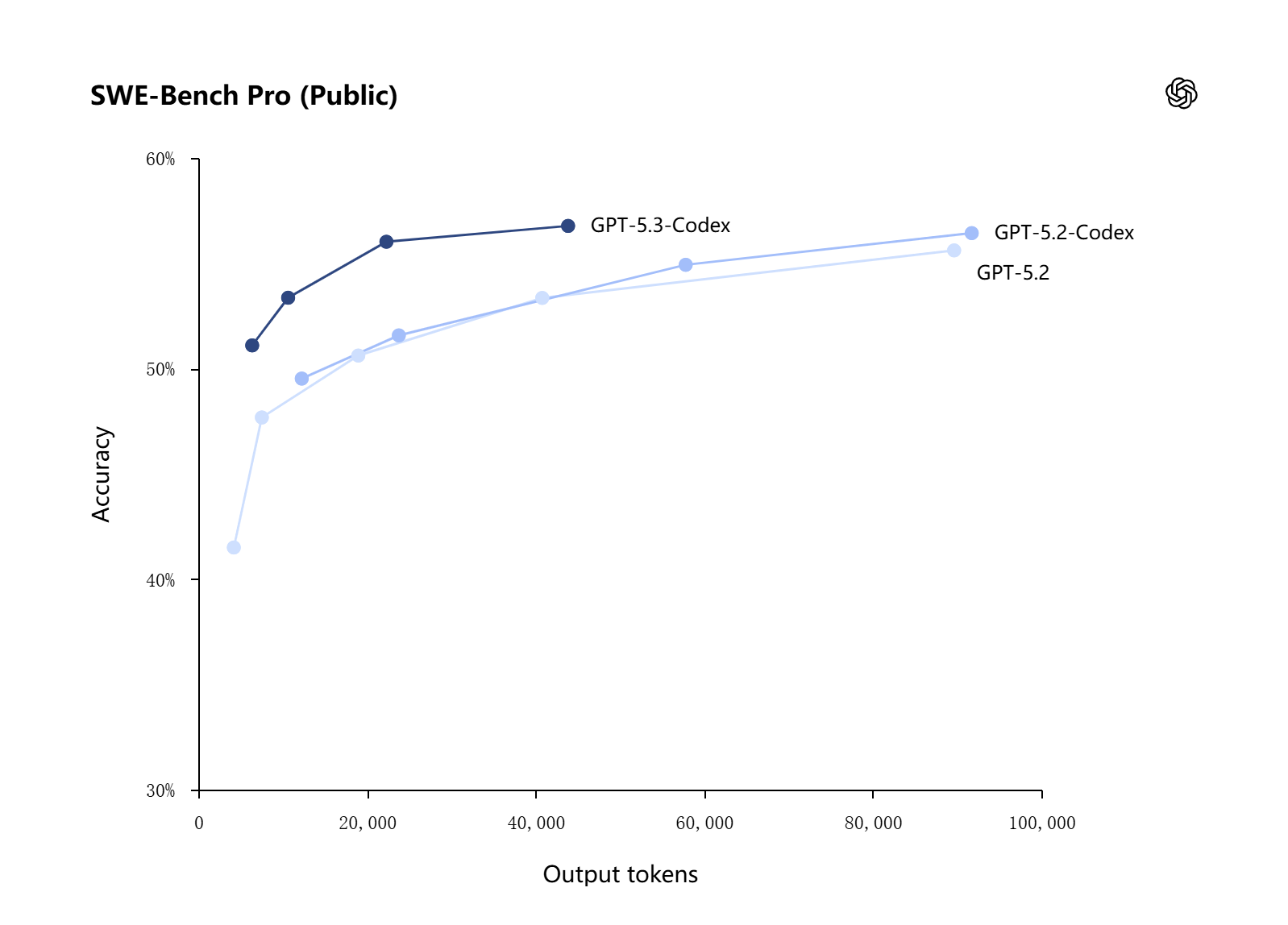

Performance and Benchmark Standing

Public benchmark tracking platforms, including Artificial Analysis, show GPT 5.3 Codex improving on pass rate metrics across standard code evaluation suites.

On HumanEval-style tasks, the gains appear incremental rather than explosive. However, in structured prompt environments, the model reduces formatting drift and incomplete function definitions. That is often more valuable in practice than a small percentage increase in theoretical accuracy.

Latency remains within the expected range for mid-to-large models. It is not the fastest option available, but it scales consistently under concurrent load.

In production systems, consistency often outweighs peak speed. A slightly slower model that avoids malformed output can reduce downstream validation overhead.

How It Compares in the Current Landscape

To understand its practical positioning, it is helpful to compare GPT 5.3 Codex with two well-known alternatives: GPT-4o and Claude Sonnet.

Compared with GPT-4o

GPT-4o is broader. It handles multimodal inputs and demonstrates stronger long-form reasoning across varied domains. If your workload includes visual input or cross-domain reasoning, GPT-4o remains more capable.

In pure code-focused pipelines, however, GPT 5.3 Codex often feels more restrained. It adheres to explicit instructions without adding unnecessary commentary. For automation scenarios where responses are parsed programmatically, that restraint is useful.

The trade-off is clear: GPT-4o offers versatility. GPT 5.3 Codex offers narrower but steadier behavior in engineering contexts.

Compared with Claude Sonnet

Claude Sonnet performs well in explanation-heavy workflows. It tends to generate readable reasoning around code decisions and can be strong in refactoring discussions.

GPT 5.3 Codex, by contrast, emphasizes execution-ready outputs. When asked to generate configuration files or structured schemas, it is less likely to deviate from requested formats.

The distinction becomes visible in CI environments. Systems that validate strict JSON or YAML schemas benefit from models that do not improvise formatting.

Where GPT 5.3 Codex Works Best

IDE-Level Code Assistance

Inside development environments, GPT 5.3 Codex produces concise completions. It tracks variable names more reliably across medium-length contexts and avoids re-declaring already defined structures.

The improvements are subtle but noticeable over extended sessions.

CI and Infrastructure Automation

Automation pipelines expose formatting weaknesses quickly. A missing bracket can halt deployment.

GPT 5.3 Codex performs reliably when generating:

- Dockerfiles

- Infrastructure templates

- REST endpoint scaffolding

- Database schema migrations

It does not eliminate the need for validation. But it reduces the rate of syntactic errors compared to earlier generations.

Agent-Based Toolchains

Agent systems increasingly rely on structured intermediate outputs. The model’s tendency to respect formatting constraints makes it suitable for tool-calling frameworks.

Rather than producing conversational filler, GPT 5.3 Codex focuses on task completion. That improves reliability in chained execution loops.

When accessed through Siray.ai, teams can evaluate GPT 5.3 Codex alongside other models without modifying core integration logic. The unified API approach simplifies comparison testing and reduces migration friction.

Documentation and Code Explanation

Although not optimized for narrative writing, GPT 5.3 Codex can generate technical documentation from source files. It summarizes function behavior accurately and produces usage examples that reflect parameter constraints.

This makes it useful for internal developer portals or automated documentation workflows.

Final Assessment

GPT 5.3 Codex is not designed to impress with broad conversational creativity. Its strength lies in structured, execution-ready output within engineering workflows.

It offers:

- Stable code generation

- Improved formatting consistency

- Predictable scaling behavior

- Practical cost positioning

For teams building copilots, DevOps automation, or agent-driven development systems, it represents a focused and pragmatic option.

GPT 5.3 Codex is available for testing and integration through Siray.ai’s unified API platform.