HappyHorse 1.0 — The Mystery #1 AI Video Model

HappyHorse-1.0 just hit the top spot on the Artificial Analysis Video Arena leaderboard — and unlike most mystery models, you can actually use it right now through Siray.ai.

How HappyHorse-1.0 Appeared on the Radar

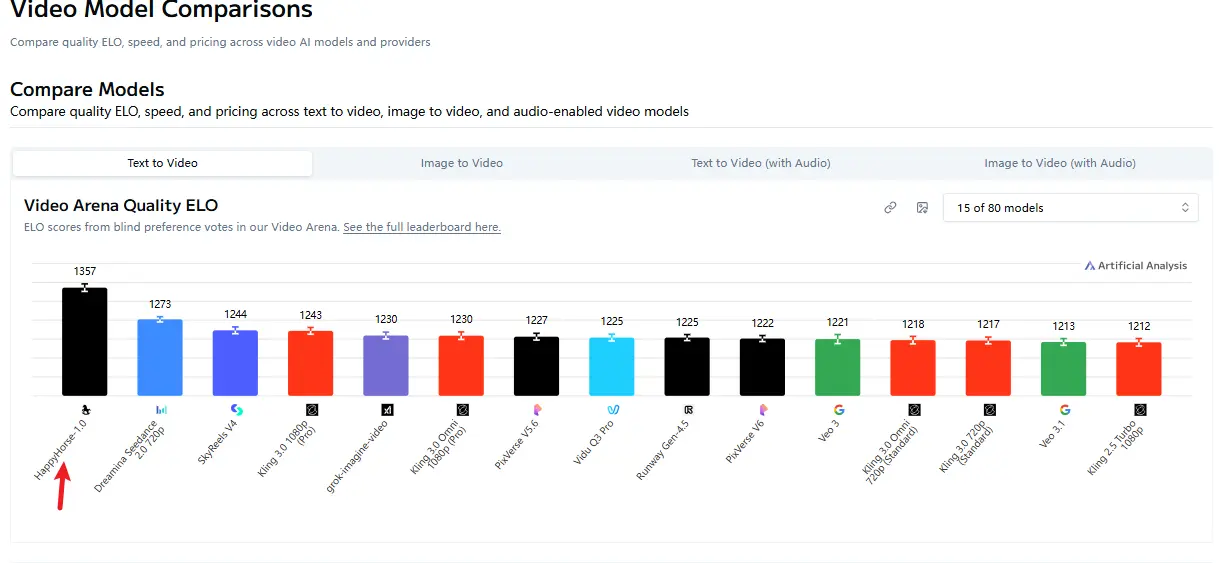

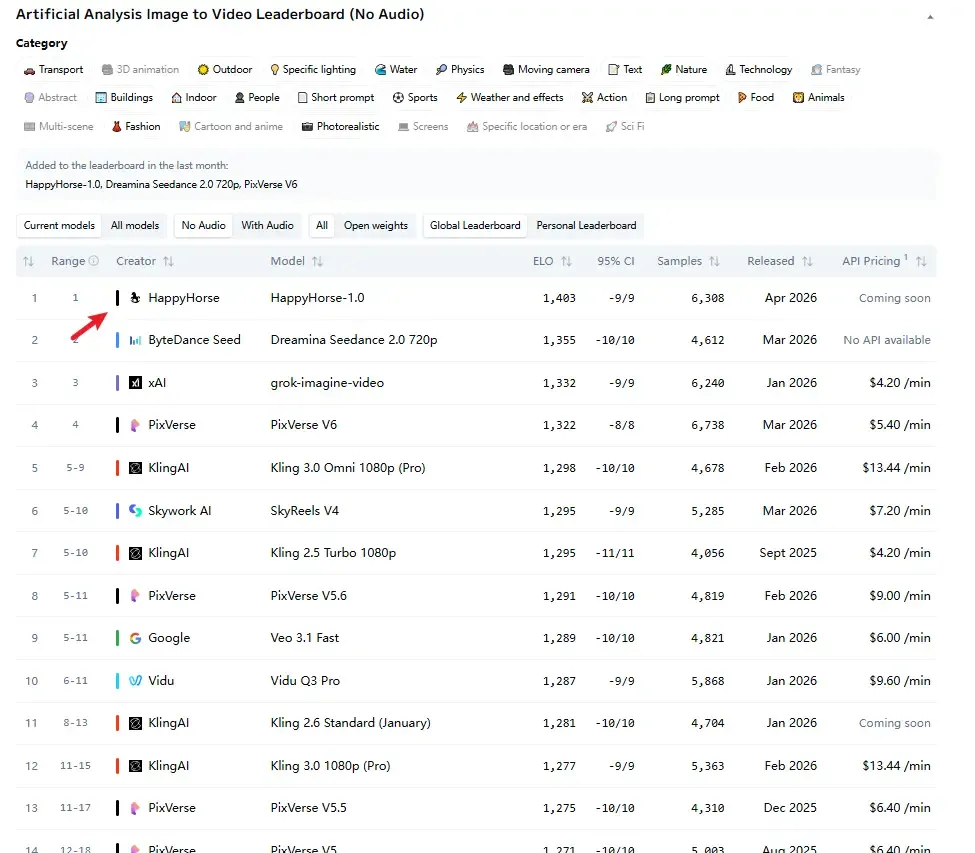

The Artificial Analysis Video Arena ranks models based on real blind user votes using an Elo system. No self-reported benchmarks, no cherry-picked examples — just side-by-side comparisons where users pick the better video without knowing which model created it.

As of April 2026, HappyHorse-1.0 sits at:

- #1 in Text-to-Video (no audio) — Elo 1333

- #1 in Image-to-Video (no audio) — Elo 1392

- #2 in both categories with audio

That puts it roughly 60 Elo points ahead of the previous leader, Seedance 2.0, in text-to-video, which is a meaningful gap in blind testing.
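To put that gap in perspective, here is a minimal sketch that converts an Elo difference into an expected head-to-head win rate. It assumes the textbook Elo formula with a 400-point scale. The leaderboard's exact rating method is not described here, so treat the result as a rough guide rather than the arena's own figure. The function name `elo_expected_score` is ours, chosen for illustration.

```python
def elo_expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected share of head-to-head wins for A over B under the standard Elo model.

    Assumption: the classic logistic formula with a 400-point scale.
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


# A 60-point lead, the approximate text-to-video gap quoted above.
gap_win_rate = elo_expected_score(1333, 1333 - 60)
print(f"Expected win rate at +60 Elo: {gap_win_rate:.1%}")
```

Under that assumption, a 60-point lead works out to roughly a 58.5% expected win rate in blind pairwise votes: a clear edge, but far from a sweep.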

What We Know About the Model

According to its own site, HappyHorse-1.0 uses a unified single-stream Transformer architecture with 40 layers. It jointly processes text, image, video, and audio tokens in one pipeline — no separate models for different modalities.

The site also claims strong multilingual audio generation (Chinese, English, Japanese, Korean, German, French, and more) with decent lip-sync, plus solid motion quality and visual consistency.

Why This Matters for Builders

Most #1 models on video leaderboards stay locked behind waitlists or private access. HappyHorse-1.0 is different — we’ve integrated it so you can start experimenting immediately.

No need to wait for official APIs or deal with unstable endpoints. Just switch the model name in your code and generate.

Everything is accessible through a single OpenAI-compatible API.
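As a rough illustration of what "switch the model name" looks like in practice, the sketch below builds two otherwise identical requests that differ only in the `model` field. Everything specific in it is an assumption made for the example: the base URL, the endpoint path, the model ID strings, the `API_KEY` variable, and the payload fields are placeholders, not documented values. Check the actual API reference before using it. The request is built but not sent.

```python
import json
import os
import urllib.request

# Assumptions (not from the article): base URL, endpoint path, model IDs and
# payload fields are placeholders. Replace them with values from the API docs.
BASE_URL = "https://api.example.com/v1"   # hypothetical
VIDEO_ENDPOINT = "/video/generations"     # hypothetical path


def build_video_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request with Bearer authentication."""
    payload = {"model": model, "prompt": prompt}
    return urllib.request.Request(
        url=BASE_URL + VIDEO_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


api_key = os.environ.get("API_KEY", "sk-placeholder")  # hypothetical variable name
prompt = "A horse galloping across a beach at sunrise"

# Only the model string changes; URL, headers and prompt stay the same.
req_a = build_video_request("some-other-video-model", prompt, api_key)
req_b = build_video_request("happyhorse-1.0", prompt, api_key)

print(json.loads(req_b.data)["model"])
# To actually send it once the placeholders are real: urllib.request.urlopen(req_b)
```

Keeping the model ID as a plain function argument means trying a different model is a one-line change, with no other edits to the calling code.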

Start generating with HappyHorse-1.0 now: