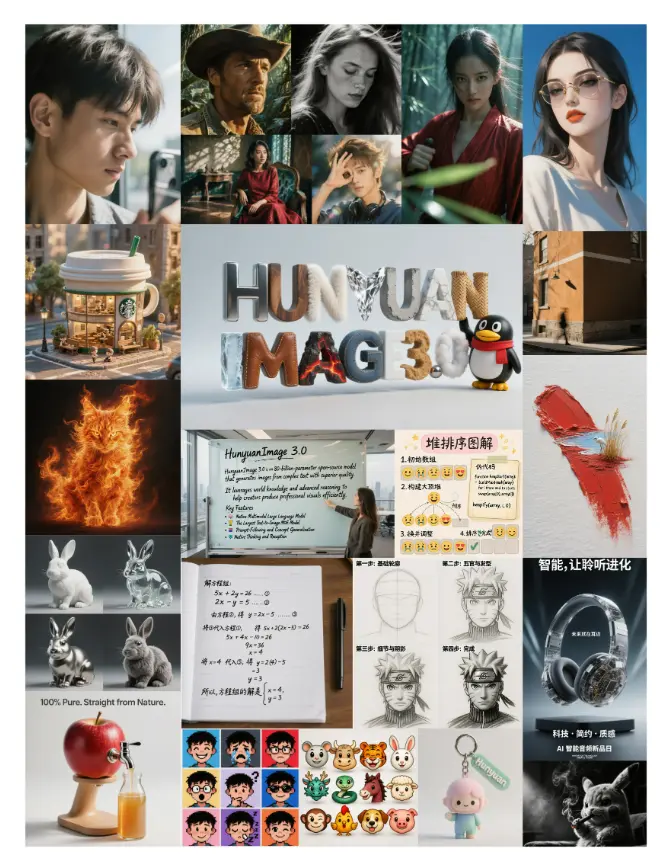

Hunyuan Image 3 Instruct T2I

A Practical Look at Flexible, High-Quality Image Generation

Introduction

Text-to-image generation has matured quickly, but developers still run into the same friction points: outputs can be inconsistent, prompts require constant tuning, and many models enforce strict content filtering that limits creative use cases.

Hunyuan Image 3 Instruct is part of a newer generation of models trying to address these issues. Instead of focusing only on visual quality, it emphasizes instruction following and prompt clarity, while also supporting a broader range of image generation scenarios.

For developers building creative tools or content platforms, this combination means less prompt tuning and fewer content restrictions to design around when integrating image generation into a product.

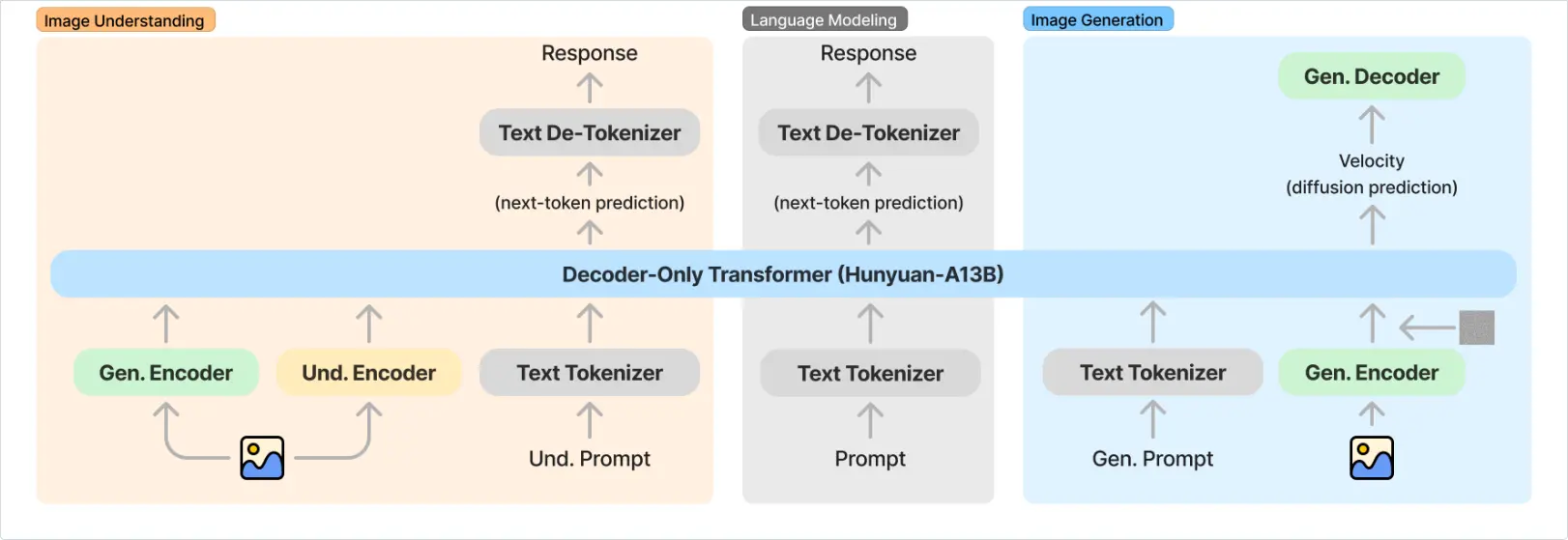

Model Overview

Hunyuan Image 3 Instruct is an instruction-tuned text-to-image model. The key difference lies in how it interprets prompts.

Earlier diffusion models often rely on prompt engineering techniques. Developers add style tokens, weights, and modifiers to guide outputs. This works, but it introduces instability: a small change to one token or weight can shift the whole image in ways that are hard to predict.

Instruction-tuned models reduce that complexity.

They respond better to direct instructions such as:

- change composition

- adjust lighting

- modify subject details

- refine visual style
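To make the contrast concrete, here is a minimal sketch of the two prompting styles as plain string builders. Both helper functions are hypothetical and written only for illustration. The parenthesized `(tag:weight)` form is a convention used by some Stable Diffusion front ends and is shown purely for comparison; nothing here is taken from Hunyuan's documentation, and no model is called.

```python
def build_tag_prompt(subject, tags):
    """Older style: comma-separated tags, each with an optional weight."""
    parts = [subject]
    for tag, weight in tags:
        # Only annotate tags whose weight differs from the default of 1.0.
        parts.append(f"({tag}:{weight})" if weight != 1.0 else tag)
    return ", ".join(parts)


def build_instruction_prompt(subject, instructions):
    """Instruction style: a base description followed by plain sentences."""
    sentences = [subject.strip().rstrip(".") + "."]
    for text in instructions:
        text = text.strip().rstrip(".")
        if text:
            sentences.append(text[0].upper() + text[1:] + ".")
    return " ".join(sentences)


tag_prompt = build_tag_prompt(
    "portrait of a lighthouse keeper",
    [("golden hour", 1.3), ("rule of thirds", 1.0), ("oil painting", 1.2)],
)

instruction_prompt = build_instruction_prompt(
    "A portrait of a lighthouse keeper",
    [
        "place the subject on the left third of the frame",   # change composition
        "light the scene with warm, low evening sun",         # adjust lighting
        "render it in the style of an oil painting",          # refine visual style
    ],
)

print(tag_prompt)
print(instruction_prompt)
```

The instruction version reads like a brief to a designer, so changing one requirement means editing one sentence rather than rebalancing weights.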

Another notable aspect is its more flexible generation policy. Compared to heavily restricted models, Hunyuan Image 3 Instruct allows broader prompt interpretation, which is useful for platforms that need fewer constraints on visual creativity.

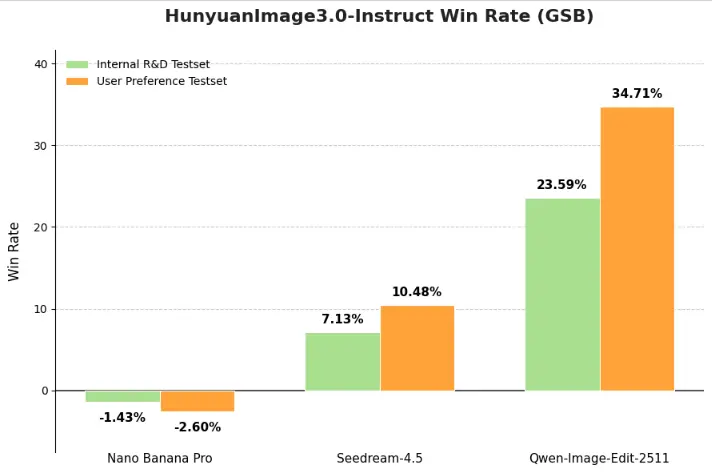

Performance and Benchmarks

Official benchmark comparisons are still limited, but early evaluations suggest strong performance in:

- prompt adherence

- visual consistency

- style control

Compared to older models, Hunyuan Image 3 Instruct produces fewer unexpected artifacts when prompts include multiple constraints.

This is particularly noticeable when generating complex scenes.

Comparison with Similar Models

Compared with Stable Diffusion:

- Hunyuan is easier to control via natural language

- Stable Diffusion offers more local customization

Compared with Midjourney:

- Midjourney excels in artistic output

- Hunyuan provides better instruction alignment

Compared with DALL·E-style models:

- Hunyuan offers more flexible prompt handling

- DALL·E focuses more on safety constraints

Use Cases

Creative Platforms

Platforms that allow user-generated content benefit from models that support broader prompt interpretation.

Character Design

Instruction-based prompts make it easier to generate consistent characters across variations.
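One way to approach this, sketched below, is to keep a fixed character description and vary only a single instruction per image. The `Character` class, the `variation_prompts` helper, and the wording of the "keep unchanged" sentence are all assumptions made for illustration; how well a given phrasing preserves identity has to be checked against the model's actual outputs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Character:
    """Fixed traits that should stay the same in every image (hypothetical)."""
    name: str
    appearance: str
    outfit: str

    def describe(self):
        return f"{self.name}, {self.appearance}, wearing {self.outfit}"


def variation_prompts(character, changes):
    """Return one prompt per change, all sharing the same base description."""
    base = character.describe()
    return [
        f"{base}. Keep the character's face and outfit unchanged. {change}"
        for change in changes
    ]


hero = Character(
    name="Mira",
    appearance="a young cartographer with short silver hair and green eyes",
    outfit="a navy field jacket",
)

prompts = variation_prompts(
    hero,
    [
        "Show her reading a map at a desk.",
        "Show her walking through rain at night.",
    ],
)

for prompt in prompts:
    print(prompt)
```

Because every prompt starts from the same frozen description, any drift between images can be traced to the one sentence that changed.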

Content Generation

Teams generating marketing visuals or blog assets can rely on more predictable outputs.

Summary

Hunyuan Image 3 Instruct focuses on:

- better instruction following

- high-quality outputs

- flexible generation scenarios

The model is available on Siray.ai for testing and integration through a unified API.
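For readers planning an integration, the sketch below shows the general shape of an HTTP request to a hosted image generation API. Everything specific in it is an assumption: the endpoint URL is a placeholder on `example.com`, the model identifier is a placeholder string, and the `model` and `prompt` field names and bearer-token header are common conventions rather than anything confirmed for Siray.ai. Take the real values from the provider's API reference. The code only builds the request object and does not send it.

```python
import json
import urllib.request


def build_generation_request(endpoint_url, api_key, model_id, prompt):
    """Build (but do not send) a JSON POST request for image generation.

    The payload field names and auth scheme are assumptions, not a
    documented API. Replace them with the provider's actual schema.
    """
    payload = {"model": model_id, "prompt": prompt}
    return urllib.request.Request(
        endpoint_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_generation_request(
    endpoint_url="https://api.example.com/v1/images",  # placeholder URL
    api_key="YOUR_API_KEY",                            # placeholder key
    model_id="your-model-id",                          # placeholder model name
    prompt="A lighthouse on a cliff. Light the scene with warm evening sun.",
)

print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Sending it would be a matter of passing `request` to `urllib.request.urlopen` once the placeholders are replaced with real values.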