Hunyuan Image 3 Instruct vs Traditional T2I Models

Introduction

Most text-to-image models today fall into two categories. Some prioritize output quality but enforce strict content filters. Others offer more flexibility but require heavy prompt engineering to get usable results.

Hunyuan Image 3 Instruct sits somewhere in between, but with a noticeable shift in philosophy. Instead of forcing users to work around limitations, it focuses on following instructions more directly while allowing a broader range of visual outputs.

This difference may not seem obvious at first. It becomes much clearer when you try to generate images that require precise control or fall outside heavily filtered categories.

How Traditional T2I Models Behave

Developers who have worked with earlier diffusion models are familiar with the usual workflow.

You write a long prompt. Then you add modifiers. Then you adjust weights. Sometimes you repeat the process several times before getting a usable result.

Even then, outputs can vary significantly.

Another common constraint is filtering. Many hosted models apply strict safety layers that block or alter prompts. While that makes sense for public-facing platforms, it can be limiting in more experimental or creative environments.

This is where friction tends to build up.

What Changes with Hunyuan Image 3 Instruct

Hunyuan Image 3 Instruct approaches the problem differently.

Instead of relying on prompt tricks, it is trained to interpret direct instructions. You can describe what you want more naturally, without stacking style keywords.

For example, instead of writing a heavily engineered prompt, you can describe:

- scene composition

- lighting conditions

- subject behavior

- visual tone
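As a rough illustration, an application might collect these four aspects as separate fields and join them into plain sentences instead of a comma-stacked keyword list. This is a minimal sketch assuming the model accepts a single plain-text prompt; the `ImageInstruction` class and its field names are hypothetical and are not part of any Hunyuan or Siray.ai API.

```python
from dataclasses import dataclass


@dataclass
class ImageInstruction:
    """Hypothetical container for the four aspects listed above."""
    composition: str
    lighting: str
    subject_behavior: str
    tone: str

    def to_prompt(self) -> str:
        # Turn each non-empty field into a capitalized sentence ending in a
        # period, rather than stacking comma-separated style keywords.
        sentences = []
        for part in (self.composition, self.lighting, self.subject_behavior, self.tone):
            text = part.strip()
            if not text:
                continue  # skip aspects the user left blank
            if not text.endswith("."):
                text += "."
            sentences.append(text[0].upper() + text[1:])
        return " ".join(sentences)


instruction = ImageInstruction(
    composition="a lighthouse on a cliff, seen from the beach below",
    lighting="late afternoon sun from the left",
    subject_behavior="a dog running along the waterline",
    tone="calm and slightly nostalgic",
)
print(instruction.to_prompt())
```

Keeping the aspects as separate fields also makes it easy to change one of them (for example, only the lighting) between generations.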

The model tends to follow these instructions more consistently than traditional, keyword-driven models.

Another noticeable difference is its less restrictive generation behavior. Prompts that might be blocked or heavily modified in other systems are more likely to be interpreted as intended.

For developers building platforms that require broader creative flexibility, this is often a deciding factor.

Unrestricted Image Generation in Practice

In practical terms, “unrestricted” does not mean uncontrolled. It means the model is less aggressive in filtering outputs and allows a wider range of prompts to be processed.

This can be useful in scenarios such as:

- artistic experimentation

- stylized character design

- mature-themed visual content

- niche creative communities

The ability to generate images without constant prompt rejection reduces friction significantly.

Developers still have the option to implement their own moderation layer if needed. That control shifts from the model provider to the application level.

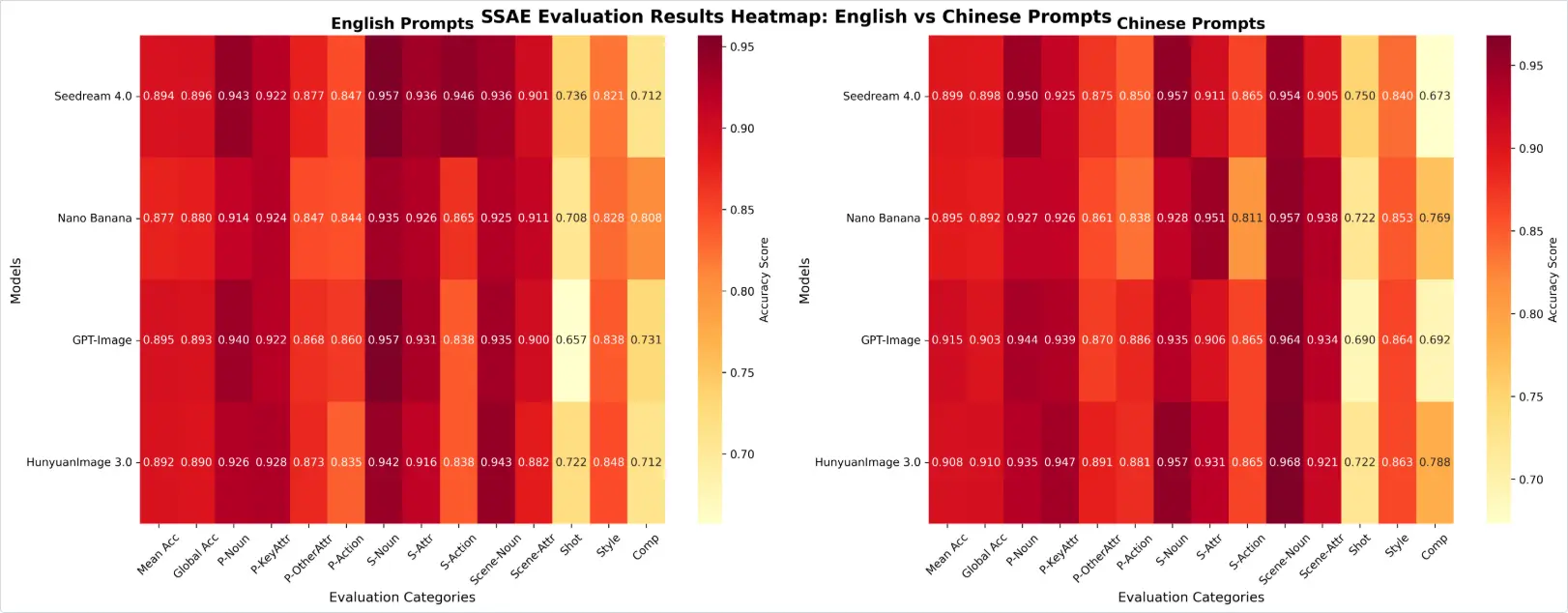

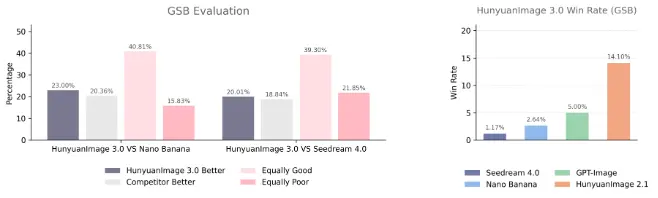

Comparison with Other Models

Compared with Stable Diffusion:

- Stable Diffusion offers deep customization and open weights

- Hunyuan provides more consistent instruction following

Compared with Midjourney:

- Midjourney produces highly stylized images

- Hunyuan gives more control over structure and prompt intent

Compared with more restricted hosted APIs:

- Hunyuan allows broader prompt interpretation

- Other APIs may block or alter outputs more aggressively

These differences become more relevant when building applications rather than running isolated prompts.

Developer Use Cases

Creative Platforms

Platforms that allow user-generated visuals often require flexibility. Strict filtering can limit user engagement, especially in communities focused on artistic expression.

Character and Concept Design

Design workflows benefit from models that can handle nuanced instructions without forcing designers to rewrite prompts repeatedly.

Content Generation Systems

Automated systems that generate visuals at scale need consistency. Instruction following reduces the number of failed generations.
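One way to make "failed generations" measurable in such a pipeline is a small retry budget per prompt, counting the attempts spent across a batch. The sketch below assumes the application supplies two hypothetical callables: `generate` (wrapping whatever image API is in use) and `is_acceptable` (the application's own output check). Neither is part of any real SDK.

```python
from typing import Callable, Dict, Iterable, Optional, Tuple

# Hypothetical callables supplied by the application:
#   generate(prompt) -> image bytes, or None on an API error
#   is_acceptable(image) -> True if the image passes the app's own checks
Generator = Callable[[str], Optional[bytes]]
Validator = Callable[[bytes], bool]


def generate_with_retries(
    prompt: str, generate: Generator, is_acceptable: Validator, max_attempts: int = 3
) -> Tuple[Optional[bytes], int]:
    """Return (image, attempts_used); image is None if every attempt failed."""
    for attempt in range(1, max_attempts + 1):
        image = generate(prompt)
        if image is not None and is_acceptable(image):
            return image, attempt
    return None, max_attempts


def run_batch(
    prompts: Iterable[str], generate: Generator, is_acceptable: Validator, max_attempts: int = 3
) -> Tuple[Dict[str, Optional[bytes]], int, int]:
    """Generate one image per prompt; return (results, total_attempts, failures)."""
    results: Dict[str, Optional[bytes]] = {}
    total_attempts = 0
    failures = 0
    for prompt in prompts:
        image, used = generate_with_retries(prompt, generate, is_acceptable, max_attempts)
        results[prompt] = image
        total_attempts += used
        if image is None:
            failures += 1
    return results, total_attempts, failures


# Usage with a stub generator: prompts starting with "hard" fail on their first attempt.
_calls: Dict[str, int] = {}


def fake_generate(prompt: str) -> Optional[bytes]:
    _calls[prompt] = _calls.get(prompt, 0) + 1
    if prompt.startswith("hard") and _calls[prompt] == 1:
        return b""  # empty output, rejected by the validator below
    return b"\x89PNG fake image"


results, attempts, failed = run_batch(["easy cat", "hard fox"], fake_generate, lambda img: len(img) > 0)
print(attempts, failed)  # 3 0
```

The ratio of total attempts to prompts gives a simple, model-independent way to compare how often different models need retries on the same workload.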

Developer Notes

API Access

Hunyuan Image 3 Instruct can be accessed through unified API platforms such as Siray.ai. This makes it easier to test alongside other models without changing integration logic.
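The idea of testing several models without changing integration logic can be sketched as a single request builder where only the model string varies. Everything platform-specific here is a placeholder: the endpoint URL, the JSON field names, the bearer-token header, and the model identifiers are assumptions for illustration, not Siray.ai's documented API. The request is built but not sent; sending it would be a call to `urllib.request.urlopen(req)`.

```python
import json
import urllib.request

# Placeholder values: NOT Siray.ai's documented endpoint, auth scheme, or model IDs.
API_URL = "https://api.example.com/v1/images/generations"
API_KEY = "YOUR_API_KEY"


def build_image_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request for a hypothetical unified API."""
    payload = {"model": model, "prompt": prompt}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {API_KEY}",
        },
        method="POST",
    )


# Comparing models only changes the model string; the integration code stays the same.
for model_id in ["hunyuan-image-3-instruct", "another-model"]:  # placeholder IDs
    req = build_image_request(model_id, "A lighthouse at dusk, seen from the beach.")
    print(req.get_method(), req.full_url, json.loads(req.data))
```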

Prompt Strategy

Short, clear instructions tend to perform better than long prompt chains. Over-specifying can sometimes reduce output quality.

Moderation Considerations

Since the model supports more flexible outputs, developers may want to implement:

- custom filtering

- user-level controls

- content guidelines
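A minimal sketch of such an application-level layer is shown below, combining a global blocklist (content guidelines) with a per-user opt-in (user-level controls). The `ModerationPolicy` and `UserSettings` classes and the example terms are hypothetical, and the keyword matching is deliberately naive; a production system would more likely use a classifier and would also check the generated images, not only the prompts.

```python
import re
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class ModerationPolicy:
    """Hypothetical app-level content guidelines; the term lists are illustrative."""
    blocked_terms: FrozenSet[str] = frozenset()  # rejected for every user
    opt_in_terms: FrozenSet[str] = frozenset()   # allowed only for opted-in users


@dataclass
class UserSettings:
    """Hypothetical per-user control, e.g. an opt-in for mature-themed content."""
    allow_mature: bool = False


def check_prompt(prompt: str, policy: ModerationPolicy, user: UserSettings) -> Tuple[bool, str]:
    """Return (allowed, reason) using naive keyword matching on lowercase word tokens."""
    words = set(re.findall(r"[a-z0-9']+", prompt.lower()))
    blocked = words & policy.blocked_terms
    if blocked:
        return False, f"blocked by content guidelines: {sorted(blocked)}"
    gated = words & policy.opt_in_terms
    if gated and not user.allow_mature:
        return False, f"requires opt-in: {sorted(gated)}"
    return True, "ok"


policy = ModerationPolicy(blocked_terms=frozenset({"forbidden"}), opt_in_terms=frozenset({"mature"}))
print(check_prompt("A mature-themed character portrait", policy, UserSettings()))
print(check_prompt("A mature-themed character portrait", policy, UserSettings(allow_mature=True)))
```

Because the check runs before the request is sent, the same policy can sit in front of any model the application uses.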

This approach offers more control than fully restricted APIs do.

Summary

Hunyuan Image 3 Instruct is less about raw image quality improvements and more about control and flexibility.

Its strengths include:

- clearer instruction following

- reduced prompt engineering

- broader generation range

For developers building creative tools, that combination is often more valuable than incremental quality gains.

The model is available on Siray.ai for testing and integration through a unified API.