Nano Banana 2: A Practical Look at Performance, Benchmarks, and Deployment Trade-offs

Introduction

Nano Banana 2 is the second major release in the Nano Banana model line, built with a clear goal: improve reasoning consistency and coding reliability without significantly increasing inference cost.

Instead of chasing frontier-level scale, this version focuses on practical improvements. The update refines instruction adherence, extends usable context, and reduces formatting instability — all areas that matter more in production systems than raw benchmark headlines.

For teams running chat systems, internal automation tools, or lightweight agents, Nano Banana 2 sits in an interesting position. It is not a flagship model. It is not ultra-compact either. It occupies the middle ground — where most real workloads actually live.

This article looks at how it performs, where it fits, and what developers should consider before integrating it.

What Changed in Nano Banana 2

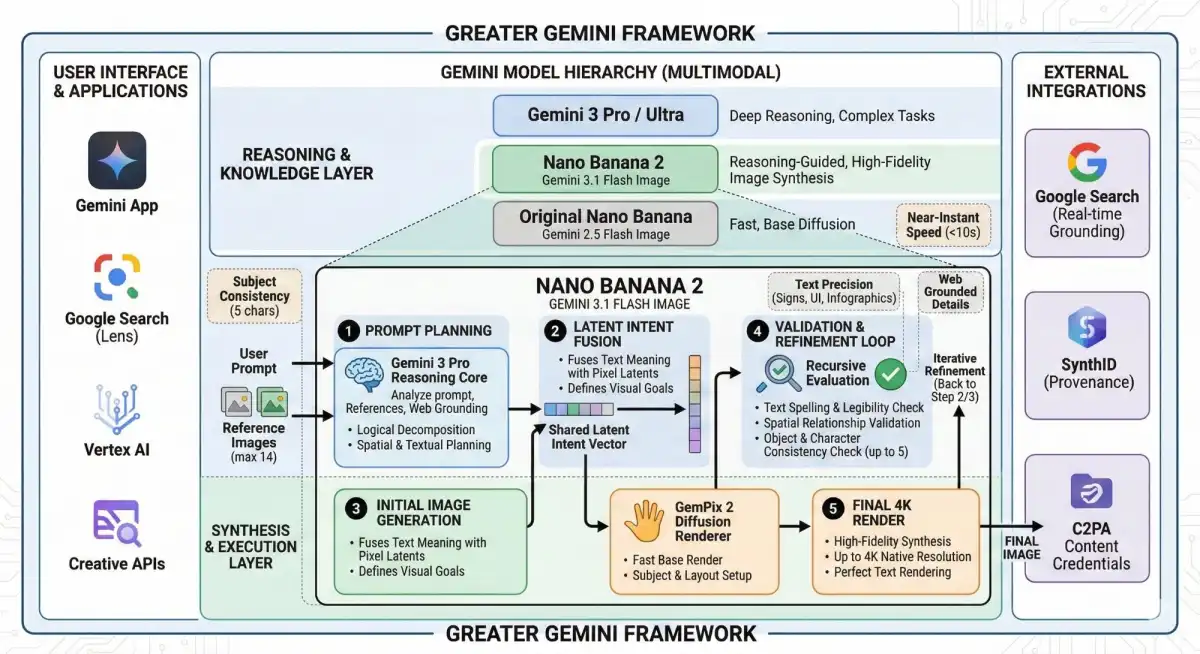

At a high level, Nano Banana 2 keeps the transformer-based foundation of the original model but adjusts training strategy and inference optimization rather than dramatically increasing parameter count.

The differences are subtle but noticeable in real use:

- More consistent instruction following

- Lower variance in structured outputs

- Improved code generation accuracy

- Better stability across longer prompts

The architecture itself is not publicly described in extreme detail, but based on behavior and latency characteristics, the focus appears to be on efficiency tuning rather than scale expansion.

That design choice matters. Larger parameter counts often improve benchmark scores, but they also increase cost and latency. Nano Banana 2 seems optimized for predictable production behavior instead of leaderboard performance.

Benchmark Performance

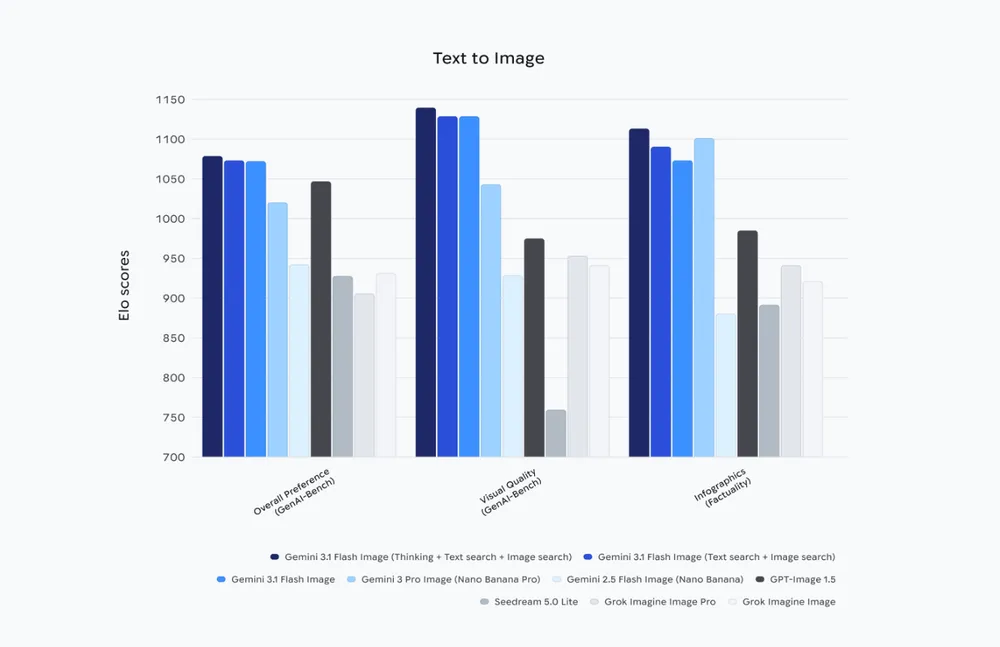

Public benchmark tracking platforms such as Artificial Analysis show incremental but meaningful improvements over the previous version in reasoning and coding tasks.

In instruction-following benchmarks, Nano Banana 2 reduces formatting errors compared to Nano Banana 1. This is especially visible in JSON generation tasks, where bracket mismatches and structural drift were more common in the earlier release.

Coding benchmarks show better pass@1 performance in common scripting tasks. The improvement is not dramatic, but it is consistent.

Compared with larger models, benchmark scores remain moderate. However, the gap narrows in structured reasoning tasks where prompt clarity matters more than deep abstract reasoning.

Latency tests indicate that Nano Banana 2 maintains stable token generation speed under sustained load. It does not spike unpredictably, which is important for real-time systems.

Cost per million tokens is positioned below most flagship-tier models. That alone makes it viable for high-volume SaaS applications.

Comparing Nano Banana 2 with Other Mid-Tier Models

To understand its position more clearly, it helps to compare it with two widely used alternatives: GPT-4o and Claude Haiku.

Against GPT-4o

GPT-4o remains stronger in multimodal reasoning and deeper logical tasks. If your system depends on image understanding or long multi-step reasoning chains, GPT-4o will generally outperform Nano Banana 2.

Where Nano Banana 2 competes more effectively is in cost-sensitive automation. For structured output tasks or predictable chat flows, the performance difference often narrows — while the pricing difference becomes noticeable.

In other words, GPT-4o is broader and more powerful. Nano Banana 2 is narrower but more economical.

Against Claude Haiku

Claude Haiku is optimized for low latency and conversational smoothness. In purely conversational experiences, Haiku may feel slightly more natural in tone.

Nano Banana 2, however, appears stronger in rigid formatting scenarios. If your system depends heavily on structured tool outputs or schema-bound responses, Nano Banana 2 tends to produce fewer structural deviations.

The choice between them often depends less on raw intelligence and more on workflow requirements.

Developer Considerations

Integration

Nano Banana 2 follows standard API-based interaction patterns: prompt input, temperature configuration, token limits, and response parsing.

Through Siray.ai, developers can access Nano Banana 2 using a single API key alongside other models. This simplifies infrastructure decisions. Instead of committing to one provider upfront, teams can benchmark performance in parallel.

This is particularly useful during early-stage evaluation.

Latency and Throughput

Latency is one of the model’s stronger attributes. It remains stable under moderate concurrency.

That stability is important for:

- Live chat applications

- Real-time copilots

- API-driven automation

In systems where response time consistency matters more than maximum reasoning depth, this characteristic becomes valuable.

Cost Strategy

Nano Banana 2’s pricing makes it suitable for high-frequency requests.

If your system processes thousands of short prompts per hour, cost per token becomes more important than absolute benchmark scores. In these environments, using a slightly smaller model often makes economic sense.

Siray.ai enables side-by-side cost comparison across models, allowing teams to quantify performance per dollar before scaling.

Where Nano Banana 2 Fits

Nano Banana 2 is not trying to redefine the AI landscape. It is not competing with frontier research models. Its strength lies in predictability.

It works well when:

- You need structured output reliability

- You care about inference cost

- You want consistent latency

- You are building SaaS-scale automation

It is less suitable when:

- You require advanced multimodal reasoning

- You need extremely deep chain-of-thought logic

- Creative writing quality is the primary goal

Understanding this positioning helps avoid mismatched expectations.

Evaluation Recommendations

Before deploying Nano Banana 2 in production, consider running controlled tests:

- Compare structured output error rates

- Measure latency under peak load

- Track cost per completed task

- Test long-context prompts

Using Siray.ai’s unified access layer, these experiments can be performed without rewriting integration logic for each model provider.

That flexibility shortens evaluation cycles and reduces migration risk.

Summary

Nano Banana 2 delivers incremental but practical improvements over its predecessor. It offers:

- More stable structured outputs

- Better coding consistency

- Predictable latency

- Controlled operational cost

It is best suited for chat systems, automation pipelines, coding assistants, and agent-based workflows where reliability matters more than raw benchmark dominance.

Developers who prioritize cost efficiency and integration simplicity will likely find it a strong mid-tier option.

Nano Banana 2 is available for testing and integration through Siray.ai’s unified API infrastructure.