How Seedance 2.0 Could Change AI Video Generation

The Current State of AI Video

AI video models are improving rapidly, but many developers still face limitations.

Common challenges include:

- short video duration

- limited style control

- inconsistent outputs

Because of these limitations, developers keep evaluating new models that might overcome them.

Seedance 2.0 has started to appear in these conversations.

What Seedance 2.0 Might Improve

While full details are still emerging, the model is rumored to focus on three areas:

Longer Video Outputs

Longer generated clips are critical for storytelling and marketing content.

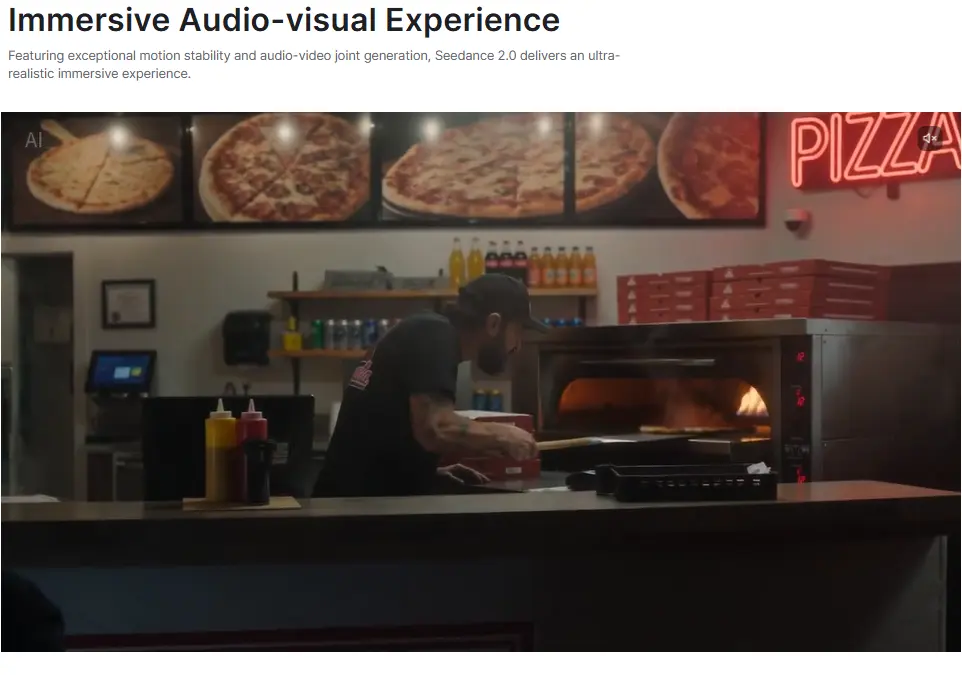

Better Scene Consistency

Maintaining visual consistency between frames is one of the hardest problems in video generation.

Newer models like Seedance 2.0 may address this challenge.

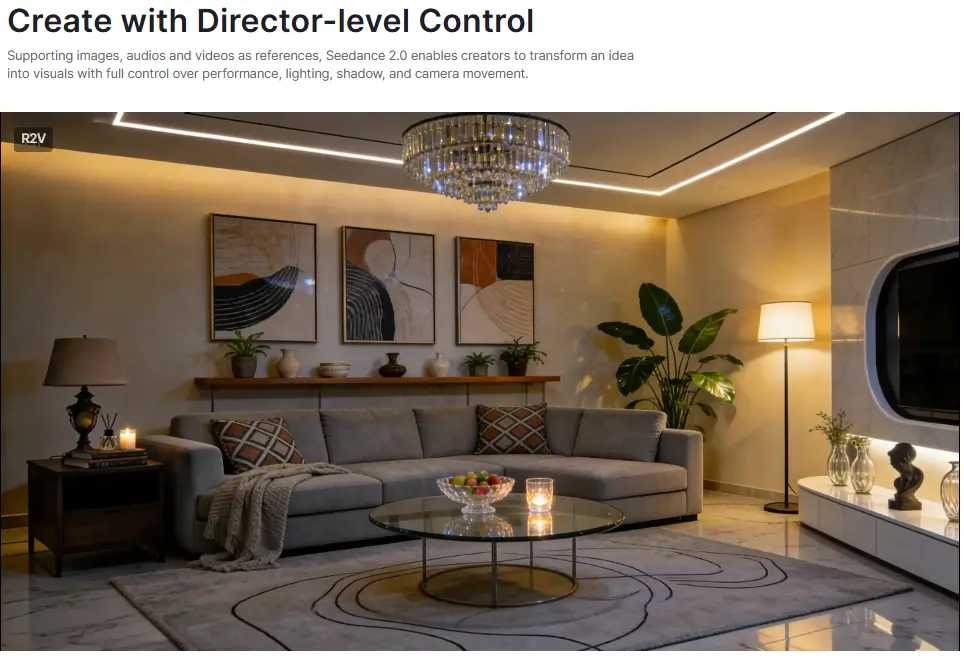

Better Prompt Interpretation

Like modern image models, video models are becoming increasingly instruction-driven.

This allows users to describe scenes more clearly.

Why Developers Are Interested

For developers building AI products, video generation is becoming an important feature.

Examples include:

- AI content platforms

- social media automation tools

- AI video editing software

If Seedance 2.0 delivers improvements in quality and stability, it could quickly gain adoption.

Join the Early Access Waitlist

We are currently exploring whether to integrate Seedance 2.0 video generation into the Siray API platform.

If you want early access once the integration becomes available, join the waitlist.